Reddit Killed Self-Service API Keys: Your Options for Automated Reddit Integration

- Author: Chris (@molehillio)

Introduction

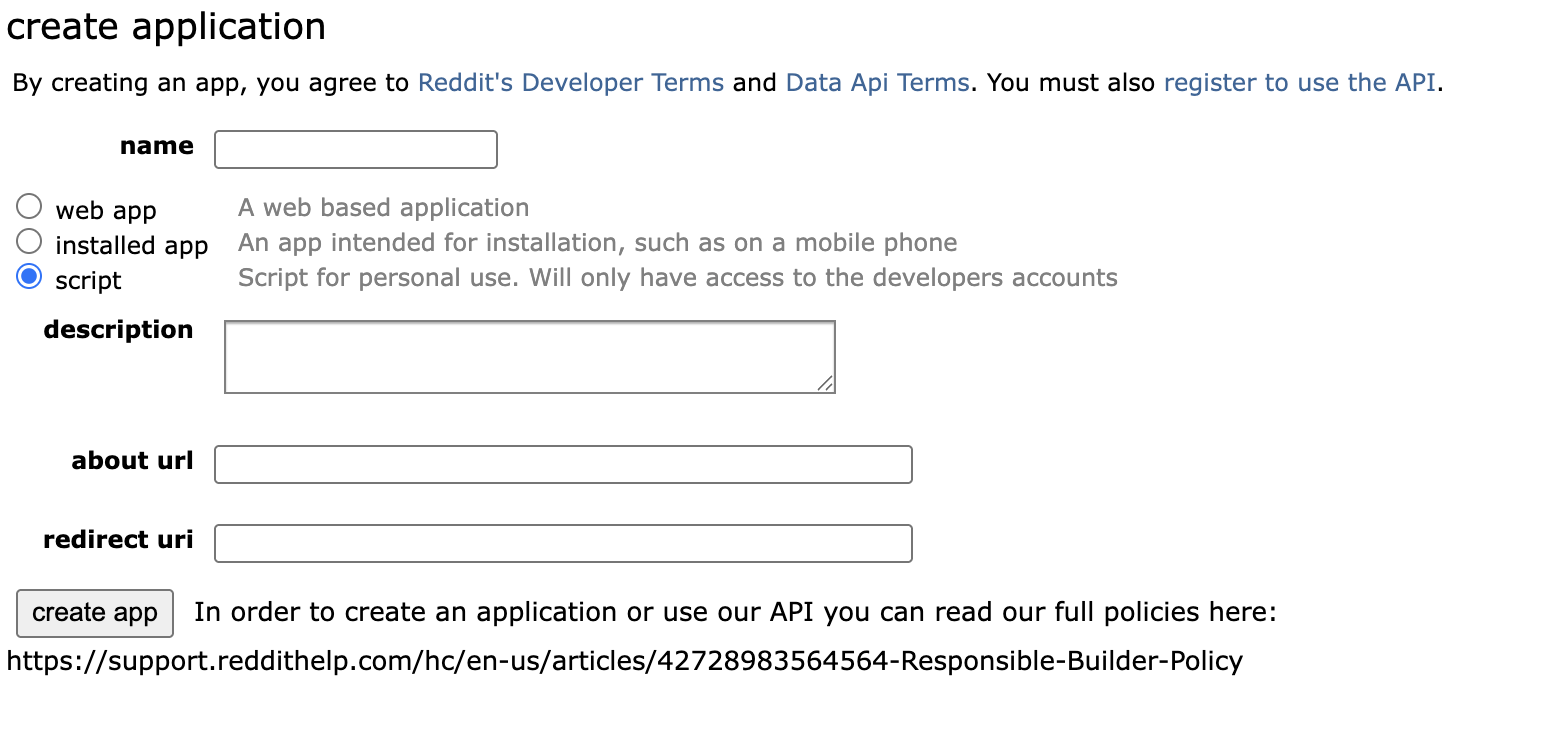

If you have tried to create Reddit API credentials recently, you have probably hit the infamous error message:

In order to create an application or use our API you can read our full policies here:...

If it's the first time you are seeing this, know that you are not alone.

Across r/redditdev, r/n8n, and other developer communities, there has been a flood of confused posts from people hitting this exact wall. Some get the "0 applications" loop. Others get a flat 403 Forbidden when their existing automations try to reach the API from server IPs. As it turns out, Reddit quietly ended self-service API access in November 2025, and a lot of automation workflows broke as a result.

In this article, we break down exactly what changed, why Reddit did it, and most importantly, what your options are now. Whether you use n8n, Make, Zapier, PRAW, or any other automation tool, these changes affect you.

If you have followed our Reddit question scraper tutorial for n8n or the Make version, you may already be wondering how this affects your workflow. Keep reading.

This article contains affiliate links. If you sign up through our links, we may earn a commission at no extra cost to you.

What Happened

The Timeline

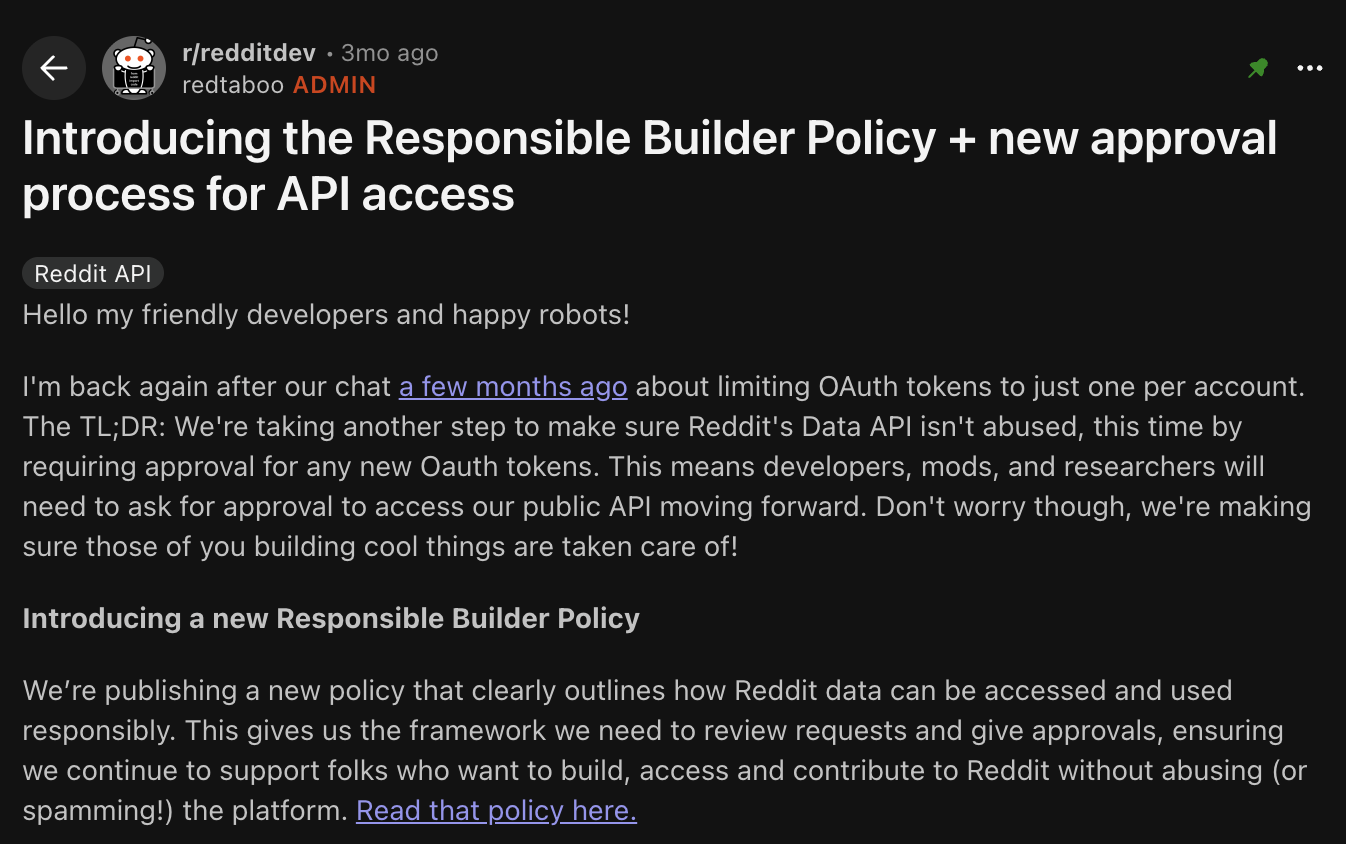

In early November 2025, Reddit published a post in r/redditdev introducing the Responsible Builder Policy and a completely new approval process for API access. At the same time, they updated their official help documentation to reflect the new rules.

The news hit like a bombshell for indie devs and automation fans: self-service API access was discontinued immediately. The old workflow of going to Reddit's "preferences > apps" page, clicking "create app," and instantly getting OAuth credentials? Gone.

What Specifically Changed

Previously, any logged-in Reddit user could create an OAuth app in seconds. You would get a client ID and secret, plug them into your automation tool, and start making API calls. No review or process, just a straightforward web form.

Under the new system:

- All new OAuth tokens require pre-approval. You cannot create a new app and start using the API without Reddit manually reviewing and accepting your application.

- The Responsible Builder Policy is the highest law. This policy defines what Reddit considers "responsible" use of their data, covering developers, moderators, researchers, and bots.

- Existing apps and tokens still work (for now). As long as you comply with Reddit's policies, you should in theory be OK. But be careful, as you cannot create new credentials for new projects through the old flow.

Why Reddit Made This Change

Reddit frames this as a move to prevent abuse of the Data API. Their stated goals include:

- Reducing spam-bots, particularly LLM-powered automated accounts that flood subreddits with trash-tier content

- Limiting large-scale scraping as data is now basically the new gold

- Supporting "good actors" like moderation tools, research projects, and useful bots through a more "formal" review process

There is no official statement that this is about monetization, but the practical effect is clear: Reddit now decides who gets in, and the bar is significantly higher than it used to be.

What This Means for Automation Users

If you use any automation platform that connects to Reddit via OAuth, this affects you directly. That includes:

- n8n's built-in Reddit node which requires OAuth credentials to function

- Make's Reddit module which has the same story (and our OAuth setup guide for Make walks through a process that is no longer available to new users!)

- Custom scripts using PRAW or similar Reddit API wrappers

- Zapier, Pipedream, or any other tool that uses Reddit's OAuth API

If you already have valid OAuth credentials from before November 2025, you are fine for now. They still work. But if you are starting fresh, or need credentials for a new project, you will need to choose an alternative.

Option 1: Apply Through the Official Process

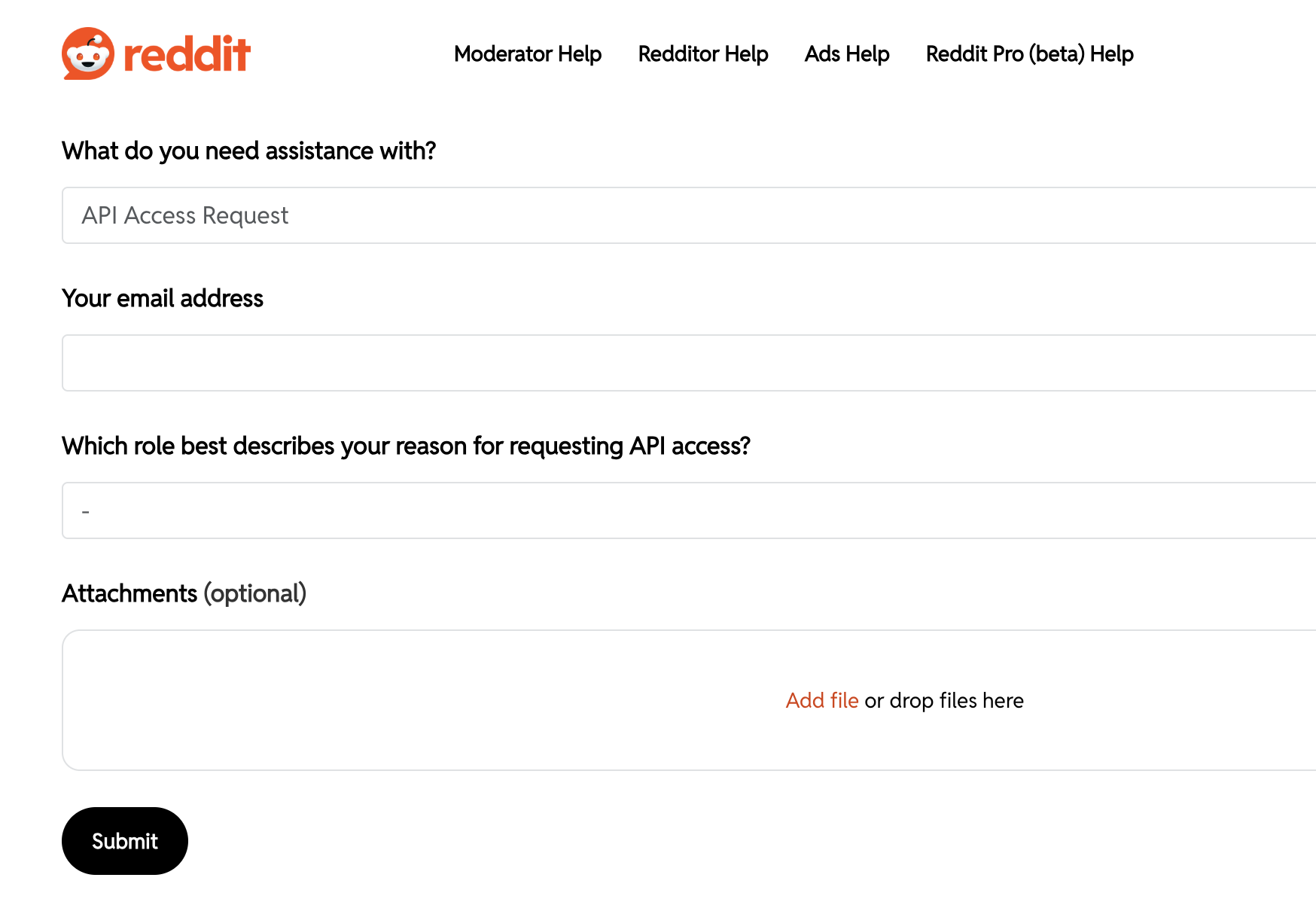

The "official" path is to apply for API access through Reddit's new approval system. Applications are submitted via Reddit's Developer Support form. Start by reviewing the Responsible Builder Policy, then submit an application that describes your use case, the data you need, which subreddits you will interact with, and your expected request volume.

Reddit says their target is a seven-day response time for most applications. If approved, you will receive OAuth credentials that should work with any automation tool (n8n, Make, Zapier, etc).

Sounds straightforward. But in practice, it is anything but!

The Application Gauntlet

We looked into it, and many developers report being rejected with just a generic message:

Submission is not in compliance with RBP and/or lacks necessary details

There is often little actionable feedback on what to fix, which is obviously frustrating.

Here are some real examples from the community:

- Academic researchers have been rejected for missing formal ethics approval letters or university-level backing, even when their research is legitimate and small-scale.

- Small commercial SaaS tools (things like scheduling or analytics products) are usually rejected outright. The alternative is to use the Enterprise tier, which starts at around $10,000 per month. Not exactly indie-hacker friendly.

- Personal analytics tools have been rejected and told to rebuild inside Devvit because they didn't provide enough details about their not-yet-built app.

What Actually Gets Approved

Based on community reports, a general pattern emerges:

- Personal scripts and bots face the highest approval difficulty (most are not approved), so it's best to consider Devvit or the alternative methods discussed below.

- Academic research applications have a medium chance of approval, but you will need to apply through Reddit for Researchers and include all necessary ethics documentation.

- Moderator tools are somewhat more likely to be approved, especially if you file a Developer Support Ticket and clearly identify your tool as being for moderation purposes. Notably, moderators of subreddits with over 100,000 subscribers report the highest success rate in getting access.

- Commercial use is effectively impossible for most businesses unless you have an Enterprise-level budget (typically $10,000 or more per month).

Tips for a Strong Application

If you do decide to apply, developers who have been approved recommend doing the following:

- Include a privacy policy with a live link explaining how you handle Reddit's 48-hour data deletion requirement

- For researchers: attach a PDF of your institutional ethics board approval

- Include a video walkthrough showing the app's UI and explaining why it specifically needs Reddit data

- Be extremely specific about scope: which subreddits, what data, what actions, and expected request volume
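To put a concrete number on "expected request volume", a rough back-of-the-envelope calculation is usually enough. The sketch below is only an illustration: the function name and its parameters are hypothetical, and it assumes a simple polling workflow where each poll of a subreddit costs a fixed number of requests.

```python
def estimate_requests(subreddits: int, polls_per_hour: float, requests_per_poll: int = 1) -> dict:
    """Estimate request volume for a polling workflow (illustrative only)."""
    per_hour = subreddits * polls_per_hour * requests_per_poll
    return {
        "per_minute_avg": per_hour / 60,  # average, not peak
        "per_hour": per_hour,
        "per_day": per_hour * 24,
    }


# Example: 5 subreddits, each polled every 15 minutes (4 polls per hour).
volume = estimate_requests(subreddits=5, polls_per_hour=4)
print(volume["per_hour"], volume["per_day"])
```

With these example inputs the workflow makes 20 requests per hour, or 480 per day. Figures like these are easier for a reviewer to assess than "low volume".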

Even with all of this, approval is not guaranteed. If you do decide to try it... good luck!

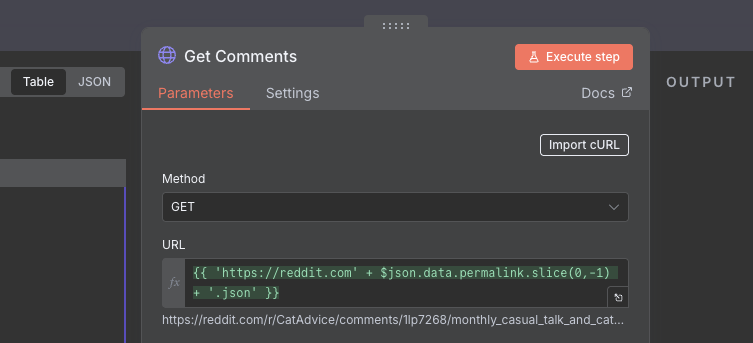

Option 2: Use Reddit's Public JSON Endpoints

It's not a well-known fact, but a lot of Reddit's content is publicly accessible without any API credentials at all. You can append .json to nearly any Reddit URL and get structured JSON data back.

For example:

- https://www.reddit.com/r/automation/new.json - latest posts in a subreddit

- https://www.reddit.com/r/n8n/comments/abc123.json - a specific post and its comments

- https://www.reddit.com/user/someuser.json - a user's public activity

You can call these directly from any HTTP client: n8n's HTTP Request node, Make's HTTP module, a Python requests call, or even just a browser. No OAuth tokens (or applications) needed.

We used exactly this approach in our Reddit question scraper tutorials for n8n and Make - and it still works!
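As a rough illustration of what calling one of these endpoints looks like outside a no-code tool, here is a minimal Python sketch using only the standard library. The `USER_AGENT` value and both function names are placeholders of our own, and the field names assume the usual listing layout (`data.children[].data`); check an actual response before relying on any particular field.

```python
import json
import urllib.request

# Assumption: a descriptive User-Agent of your own; this value is a placeholder.
USER_AGENT = "example-monitor/0.1 (contact: you@example.com)"


def fetch_listing(url: str, timeout: float = 10.0) -> dict:
    """Download a public .json listing and decode it into a dict."""
    request = urllib.request.Request(url, headers={"User-Agent": USER_AGENT})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def extract_posts(listing: dict) -> list[dict]:
    """Pull a few commonly used fields out of a listing payload."""
    posts = []
    for child in listing.get("data", {}).get("children", []):
        data = child.get("data", {})
        posts.append({
            "id": data.get("id"),
            "title": data.get("title"),
            "permalink": "https://www.reddit.com" + data.get("permalink", ""),
            "created_utc": data.get("created_utc"),
        })
    return posts
```

A typical call would be `extract_posts(fetch_listing("https://www.reddit.com/r/automation/new.json"))`. Keeping the parsing separate from the download makes it easy to test against a saved response without hitting Reddit at all.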

Limitations

- Read-only. You cannot post, comment, vote, or perform any account actions. This is strictly for consuming public data.

- Rate limited to approximately 10 requests per minute for unauthenticated access. That is enough for periodic monitoring, but not for anything high-volume.

- Anti-scraping measures. If your requests come from data-center IPs or look like automated scraping, Reddit may block them. Using a residential IP or adding appropriate delays helps.

WARNING

Respect the rate limits: add sleeps, delays, or other throttling to stay within them. It is very easy to slip up and get IP banned, or worse!
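One simple way to stay within the limit is to enforce a minimum gap between requests. The sketch below assumes the roughly 10 requests per minute figure mentioned above, which works out to one request every 6 seconds. The `Throttle` class is our own illustration, not part of any Reddit tooling.

```python
import time


class Throttle:
    """Enforce a minimum gap between calls (6 s for about 10 requests/minute)."""

    def __init__(self, max_per_minute: float = 10, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 60.0 / max_per_minute
        self._clock = clock    # injectable so the logic can be tested without waiting
        self._sleep = sleep
        self._last_call = None

    def wait(self) -> float:
        """Block until the next request is allowed; return the seconds slept."""
        delay = 0.0
        if self._last_call is not None:
            elapsed = self._clock() - self._last_call
            delay = max(0.0, self.min_interval - elapsed)
            if delay > 0:
                self._sleep(delay)
        self._last_call = self._clock()
        return delay
```

Create one `Throttle()` per workflow and call `throttle.wait()` immediately before every request. Setting `max_per_minute` a little below the real limit leaves some headroom.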

Best For

Monitoring subreddits for mentions, tracking trends, pulling content for analysis, triggering alerts when specific keywords appear. If your use case is "I want to read Reddit data on a schedule," this is probably all you need.
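For the keyword-alert case, the core logic is small: check each post's text for your keywords and remember which posts you have already alerted on. The following is a minimal sketch under the assumption that each post is a dict with `id`, `title`, and `selftext` keys. The function name is hypothetical.

```python
def find_new_matches(posts, keywords, seen_ids):
    """Return unseen posts whose title or body mentions any keyword.

    Matched post ids are added to `seen_ids` so the next poll skips them.
    """
    lowered = [keyword.lower() for keyword in keywords]
    matches = []
    for post in posts:
        post_id = post.get("id")
        if post_id in seen_ids:
            continue
        text = f"{post.get('title') or ''} {post.get('selftext') or ''}".lower()
        if any(keyword in text for keyword in lowered):
            matches.append(post)
            seen_ids.add(post_id)
    return matches
```

Note that this is plain substring matching, so "make" would also match "maker". Depending on your keywords, you may want word-boundary matching instead. In a scheduled workflow, `seen_ids` needs to be persisted between runs (a file, a database table, or your automation tool's own storage).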

Option 3: Browser Automation

If you need to post, comment, or perform account actions and cannot get approved API credentials, browser automation is an option, though not one we would recommend.

Tools like Playwright, Puppeteer, or Selenium can be wrapped behind an HTTP endpoint and called from your automation platform. Some no-code RPA services offer similar capabilities with their own APIs.

How It Works

- Run a headless browser (self-hosted or via a cloud service)

- Log in to Reddit with a real account

- Script the browser to navigate to pages, fill in forms, click buttons - just like a human would

- Expose the actions as HTTP endpoints that your automation tool can call

Downsides

WARNING

Much like exceeding rate limits, browser automation carries the risk of getting your account banned if Reddit doesn't like what you are automating.

- It's brittle. When Reddit changes their UI (which they do regularly), your automations break.

- Way slower than the API.

- Ban risk. If your behaviour looks spammy or exceeds normal human interaction patterns, Reddit will ban the account. Their bot detection is aggressive, and they explicitly prohibit automated access that violates their terms.

Best For

Users who absolutely need write access to Reddit (posting, commenting) for legitimate purposes and have been unable to get approved through the official process. We would generally advise against this and recommend working on getting proper approval or going through Devvit instead.

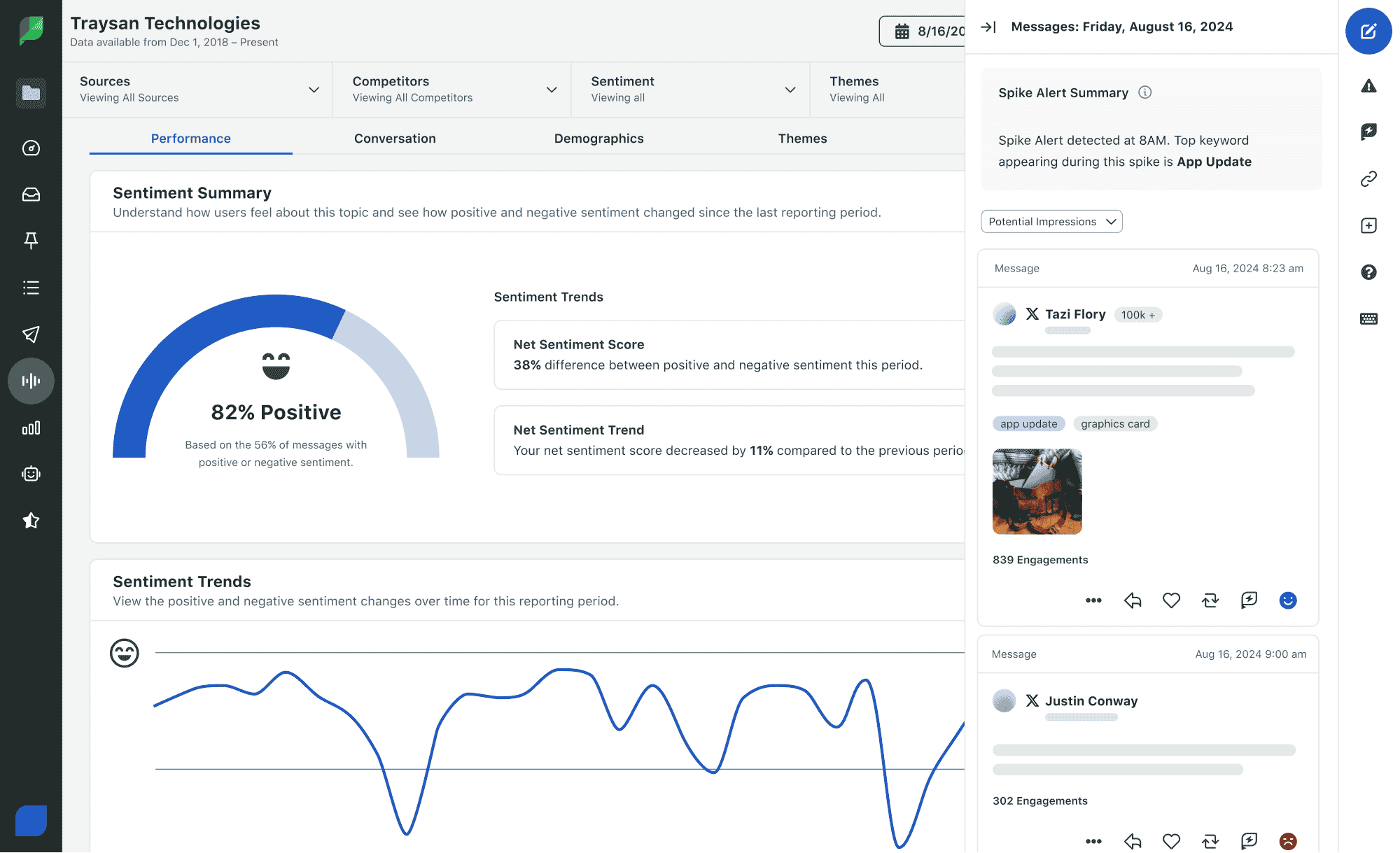

Option 4: Third-Party Reddit Data Services

Several companies already have approved Reddit API access (or are official Reddit Data Partners) and expose their own APIs or webhook integrations. Rather than dealing with Reddit's approval process yourself, you piggyback on their access. Examples include Sprout Social (which has an official Reddit partnership for social listening), established platforms like Meltwater and Brandwatch, and specialized tools such as KWatch that offer Reddit monitoring with API webhooks.

Limitations

- Usually read-only. Most data services focus on monitoring and analytics, not posting or engagement.

- Paid. Subscriptions range from modest to significant depending on volume and features.

- You are dependent on the provider maintaining their Reddit API access. If their access gets revoked, your integration breaks too.

Best For

Teams that need reliable Reddit monitoring or analytics at scale and have budget for a SaaS tool. This is the most "hands-off" option if you want data without dealing with Reddit's API directly. However, be prepared to pay.

Option 5: Devvit (For Moderators)

Reddit is actively pushing Devvit, its official platform for building apps that run directly on Reddit's infrastructure. Devvit apps integrate with subreddits and can automate moderation tasks, trigger actions on events, and interact with posts and comments - all without requiring external API keys.

How It Works With Automation Tools

The interesting part for automation users: Devvit apps can call external webhooks and HTTP endpoints. This means you can build the Reddit-facing logic inside Devvit and have it trigger workflows in n8n, Make, or any other tool that can receive HTTP requests.

For example:

- A Devvit app monitors your subreddit for new posts matching certain criteria

- When a match is found, the app sends a webhook to your n8n instance

- n8n processes the data, runs it through an AI model, posts to Slack, logs to a spreadsheet - whatever your workflow needs
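If the receiving end is a custom script rather than n8n or Make, the same flow needs a small HTTP endpoint that accepts the webhook. The sketch below uses Python's standard library and assumes a payload shape of our own invention (`post_id`, `title`, `url`). The actual payload is whatever your Devvit app chooses to send, so treat the field names as placeholders.

```python
import json
from http.server import BaseHTTPRequestHandler

# Hypothetical payload shape; match this to what your Devvit app actually sends.
REQUIRED_FIELDS = ("post_id", "title", "url")


def parse_event(body: bytes) -> dict:
    """Decode a webhook body and check the fields this sketch expects."""
    try:
        event = json.loads(body)
    except ValueError as exc:  # covers invalid JSON and undecodable bytes
        raise ValueError("body is not valid JSON") from exc
    if not isinstance(event, dict):
        raise ValueError("expected a JSON object")
    missing = [field for field in REQUIRED_FIELDS if field not in event]
    if missing:
        raise ValueError("missing fields: " + ", ".join(missing))
    return event


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            event = parse_event(self.rfile.read(length))
        except ValueError as exc:
            self.send_error(400, str(exc))
            return
        # Hand the event to the rest of your workflow here.
        print("matched post:", event["title"], event["url"])
        self.send_response(204)
        self.end_headers()
```

To run it, pass the handler to `http.server.HTTPServer`, for example `HTTPServer(("0.0.0.0", 8080), WebhookHandler).serve_forever()` (the port is arbitrary). For anything exposed to the internet you would also want some form of authentication, such as a shared secret checked on every request.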

Limitations

- Only works within subreddits you moderate. Devvit apps are scoped to specific communities.

- Subject to Devvit's own review process and rules, though approval rates are significantly higher than the general API application process.

- Different programming model. You are building a Devvit app (TypeScript), not just configuring an API connection.

Best For

Moderators who want to automate within their own subreddits and are comfortable with (or willing to learn) the Devvit framework. If you run a community and need automation, this is the path Reddit actually wants you to take. However, since it is only for moderators, the actual utility for anyone else is pretty limited.

Option 6: Use Existing Grandfathered Credentials

If you created an OAuth app before the November 2025 cutoff and it still complies with Reddit's policies, your credentials continue to work. This is the simplest "option", but only if you already have them.

You keep the original rate limit of 100 requests per minute, which is far better than having no access at all! Be careful though: if you lose your credentials through a ban or revocation, there is no way to get them back or obtain new ones.

Best For

Anyone who had the foresight (or luck) to create Reddit API credentials before the policy change. Honestly, I would keep these credentials as safe as possible. I wouldn't be surprised if down the line having one of these grandfathered credentials will be quite valuable!
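Part of keeping credentials safe is keeping them out of your source code and workflow exports. A common pattern, sketched here under the assumption that you run your own scripts, is to read them from environment variables and fail loudly when one is missing. The variable names and the function name are hypothetical.

```python
import os

# Hypothetical variable names; use whatever your deployment already defines.
REQUIRED_VARS = ("REDDIT_CLIENT_ID", "REDDIT_CLIENT_SECRET", "REDDIT_USER_AGENT")


def load_reddit_credentials(environ=os.environ) -> dict:
    """Read OAuth credentials from the environment instead of hard-coding them."""
    missing = [name for name in REQUIRED_VARS if not environ.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {
        "client_id": environ["REDDIT_CLIENT_ID"],
        "client_secret": environ["REDDIT_CLIENT_SECRET"],
        "user_agent": environ["REDDIT_USER_AGENT"],
    }
```

The returned keys match the keyword arguments PRAW accepts, so a PRAW script can use `praw.Reddit(**load_reddit_credentials())`. In n8n, Make, and similar platforms, the equivalent is the tool's built-in credential store rather than pasting the secret into individual nodes.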

What We Recommend

There isn't really a cut-and-dried answer here. It really depends on what you want to do.

If you just need to read Reddit data (monitoring mentions, tracking trends, pulling content for analysis), Option 2 (JSON endpoints) is the easiest and most reliable path. In fact, it's the one we use ourselves, since it is free. Check out our step-by-step tutorials for n8n and Make to see this approach in action.

If you need write access and have a legitimate use case, it is worth trying Option 1 (the official process). Go in with a strong application: be specific, include a privacy policy, and keep your scope narrow. Just be prepared for the (likely) possibility of rejection.

If you are a moderator, Option 5 (Devvit) is purpose-built for you and has the smoothest approval path. Reddit wants you using Devvit, so just go with it.

Finally, if you already have OAuth credentials from before the change, keep using them and stay compliant. They are really valuable now.

Whatever your situation, the automation landscape around Reddit has clearly changed. The days of spinning up a quick API key in 30 seconds are over, but there are still viable paths forward for every use case.

Frequently Asked Questions

Can I still use the Reddit API for automation in 2026?

It depends on what you already have and what you need. If you created OAuth credentials before November 2025, they still work and you can continue using them. If you are starting fresh, self-service API key creation is no longer available. New access requires applying through Reddit's Developer Support form and waiting for manual review. For read-only use cases, the public JSON endpoints (appending .json to any Reddit URL) remain available without any credentials.

What is the Reddit Responsible Builder Policy?

The Responsible Builder Policy (RBP) is the framework Reddit introduced in November 2025 that governs all API access. It defines what Reddit considers acceptable use of their data and API, covering developers, researchers, moderators, and bots. Under this policy, all new OAuth applications require pre-approval. Reddit stated the goals as reducing spam bots, limiting large-scale data scraping, and supporting "good actors" through a more structured review process.

Do existing Reddit OAuth credentials still work after the November 2025 change?

Yes, for now. Credentials created before the policy change continue to function as long as the account they are associated with remains compliant with Reddit's policies. The key risk is that if those credentials are ever revoked or the app is banned for policy violations, there is no straightforward path to replace them under the new system. Treat existing pre-November 2025 credentials as a valuable resource and keep them safe.

How do I read Reddit data without API credentials?

Append .json to any public Reddit URL. For example, https://www.reddit.com/r/automation/new.json returns the latest posts from that subreddit as structured JSON data. This works from any HTTP client, including n8n's HTTP Request node or Make's HTTP module, with no authentication required. The limit is around 10 requests per minute for unauthenticated access, and it is read-only. We use exactly this approach in our n8n Reddit scraper tutorial.

What are the chances of getting Reddit API access approved?

It varies significantly by use case. Moderators of large subreddits (100,000+ subscribers) report the highest approval rates. Academic researchers with proper ethics documentation have a moderate chance. Personal scripts and bots are rarely approved. Small commercial tools are almost always rejected unless the builder is prepared to pay for Enterprise access ($10,000/month or more). The application process is known to return vague rejection messages with little guidance on how to improve a submission.

What is Devvit and can it replace Reddit OAuth for automation?

Devvit is Reddit's official developer platform for building apps that run directly on Reddit's infrastructure. It is primarily for subreddit moderators who want to automate moderation tasks within their own communities. Devvit apps can send webhooks to external tools like n8n or Make, which means you can trigger external workflows from Reddit events without needing your own OAuth credentials. The limitation is that Devvit is scoped to subreddits you moderate, so it does not help for monitoring subreddits you do not run.